We’re pleased to share that our journal paper, “A Range-Aware Attention Framework for Meteorological Visibility Estimation,” was accepted in March 2026 for publication in the MDPI journal Sensors. The paper is a collaboration with City University of Hong Kong and Education University of Hong Kong:

- Wai Lun Lo, Kwok Wai Wong, Richard Tai Chiu Hsung, Henry Shu Hung Chung, Hong Fu, Harris Sik Ho Tsang, and Tony Yulin Zhu, “A Range-Aware Attention Framework for Meteorological Visibility Estimation,” MDPI Sensors, 26(6), 1893, 2026, doi: 10.3390/s26061893.

Paper Link: https://www.mdpi.com/1424-8220/26/6/1893

Paper Abstract

Meteorological visibility estimation aims to determine how far one can see under different weather conditions. Accurate estimates are important for safe transportation and environmental monitoring. However, even advanced deep learning models often perform poorly when visibility changes in complex, non-linear ways due to fog, haze, or other atmospheric effects. Another challenge is the limited availability of datasets that pair images with precise, sensor-based visibility measurements.

In this study, we make two main contributions. First, we built a new dataset called the Hong Kong Chu Hai College Visibility Dataset (HKCHC-VD). It contains over 11,000 high-quality images, each matched with accurate visibility readings collected from a professional Biral SWS-100 weather sensor.

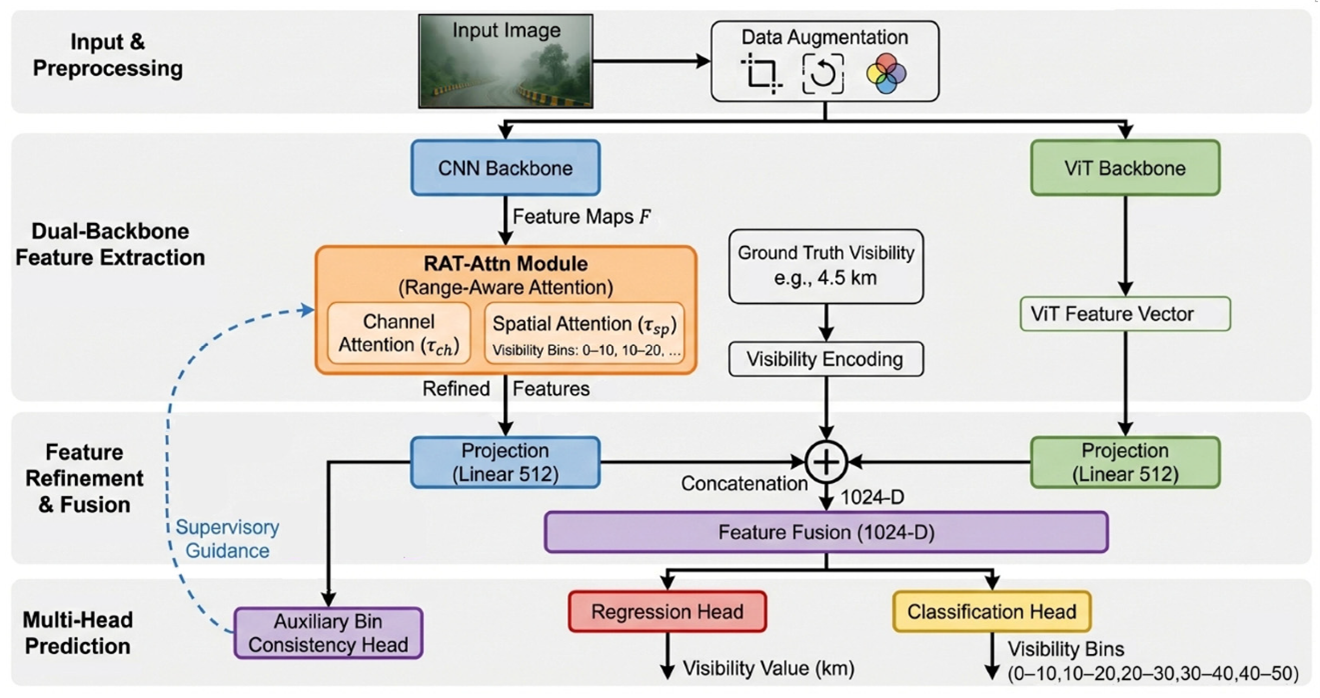

Second, we developed a new deep learning framework called Range-Aware Attention (RAT-Attn). It builds meteorological knowledge directly into a deep model through an attention mechanism that adapts to different visibility ranges. It combines two types of networks, a Convolutional Neural Network (CNN) and a Vision Transformer (ViT), and includes a learnable threshold that helps the model focus on the right image features for different distances.
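To give a rough feel for how a threshold can steer a model between two feature sources, here is a minimal sketch in plain Python. It is not the RAT-Attn implementation from the paper: the function names (`range_gate`, `fuse_features`), the sigmoid gate, the `sharpness` parameter, and the choice of which branch is favoured above the threshold are all assumptions made for illustration. In a real model the threshold would be a trainable parameter updated by gradient descent; here it is just a number.

```python
import math


def sigmoid(x: float) -> float:
    """Standard logistic function."""
    return 1.0 / (1.0 + math.exp(-x))


def range_gate(coarse_visibility_km: float, threshold_km: float,
               sharpness: float = 1.0) -> float:
    """Soft gate in (0, 1) (hypothetical, for illustration only).

    Close to 1 when the coarse visibility estimate is well above the
    threshold, close to 0 when it is well below, and exactly 0.5 at
    the threshold itself.
    """
    return sigmoid(sharpness * (coarse_visibility_km - threshold_km))


def fuse_features(cnn_feat: list[float], vit_feat: list[float],
                  gate: float) -> list[float]:
    """Blend two equal-length feature vectors with a scalar gate.

    Assumption: the gate weights the ViT features and (1 - gate)
    weights the CNN features. The paper may combine them differently.
    """
    if len(cnn_feat) != len(vit_feat):
        raise ValueError("feature vectors must have the same length")
    return [gate * v + (1.0 - gate) * c for c, v in zip(cnn_feat, vit_feat)]


if __name__ == "__main__":
    gate = range_gate(coarse_visibility_km=10.0, threshold_km=10.0)
    print(gate)                                        # 0.5
    print(fuse_features([0.0, 0.0], [2.0, 4.0], gate))  # [1.0, 2.0]
```

Because the gate is a smooth function of the threshold, a training procedure could adjust the threshold automatically, which is the general idea behind making it "learnable".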

Experiments show that RAT-Attn clearly outperforms existing visibility estimation models, such as VisNet and older ANN-based models. The best-performing version (ResNet + ViT with spatial thresholds) achieved a Mean Squared Error of 5.87 km², a Mean Absolute Error of 1.65 km, and 87.07% accuracy in classification tasks. Notably, in low-visibility situations (0–10 km), it reduced errors by more than 75% compared to previous methods.
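For readers unfamiliar with these metrics, the sketch below shows how MSE, MAE, and a range-based classification accuracy can be computed from true and predicted visibility values in kilometres. The sample values and the class boundaries in `edges` are made up for illustration, and treating classification as "does the prediction fall in the same visibility range as the sensor reading" is an assumption; the paper may define its classification task differently.

```python
from bisect import bisect_right
from typing import Sequence


def mean_squared_error(y_true: Sequence[float], y_pred: Sequence[float]) -> float:
    """Average of squared errors; the unit is km^2 when inputs are in km."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


def mean_absolute_error(y_true: Sequence[float], y_pred: Sequence[float]) -> float:
    """Average of absolute errors; the unit is km when inputs are in km."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def range_class(visibility_km: float, edges: Sequence[float]) -> int:
    """Index of the visibility range a value falls into.

    `edges` must be sorted; a value equal to an edge goes to the upper range.
    """
    return bisect_right(edges, visibility_km)


def classification_accuracy(y_true: Sequence[float], y_pred: Sequence[float],
                            edges: Sequence[float]) -> float:
    """Fraction of predictions that land in the same range as the truth."""
    hits = sum(range_class(t, edges) == range_class(p, edges)
               for t, p in zip(y_true, y_pred))
    return hits / len(y_true)


if __name__ == "__main__":
    # Made-up example values in km (not data from the paper).
    y_true = [2.0, 8.0, 15.0, 25.0]
    y_pred = [3.0, 12.0, 14.0, 25.0]
    edges = [10.0, 20.0, 30.0]  # hypothetical range boundaries

    print(mean_squared_error(y_true, y_pred))              # 4.5
    print(mean_absolute_error(y_true, y_pred))             # 1.5
    print(classification_accuracy(y_true, y_pred, edges))  # 0.75
```

In this toy example the second prediction (12 km against a true 8 km) crosses the 10 km boundary, so it counts as a classification miss even though the other three predictions fall in the correct range.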

We will keep advancing our collaboration with leading research institutions to develop cutting-edge technologies!

The research team members

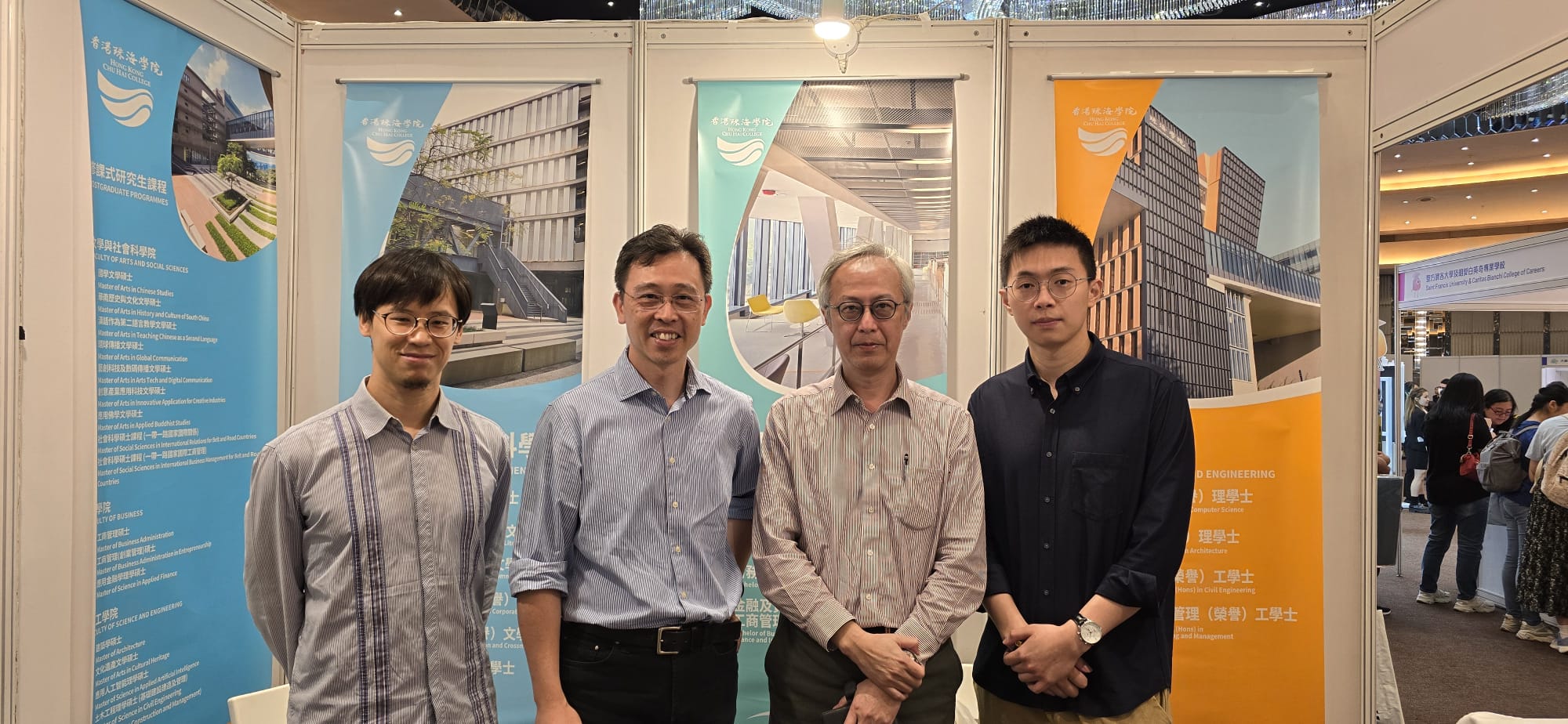

Hong Kong Chu Hai College

Prof. Wai Lun Lo, Professor and Head of the Department of Computer Science

Mr. Kwok Wai Wong, Research Assistant in the Department of Computer Science

Dr. Richard Tai Chiu Hsung, Associate Professor in the Department of Computer Science

Dr. Harris Sik Ho Tsang, Assistant Professor in the Department of Computer Science

Dr. Tony Yulin Zhu, Assistant Professor in the Department of Computer Science

City University of Hong Kong

Prof. Henry Shu Hung Chung, Chair Professor in the Department of Electrical Engineering

Education University of Hong Kong

Dr. Hong Fu, Associate Professor in the Department of Mathematics and Information Technology

Figures from the paper

The end-to-end architecture of our proposed Range-aware Attention Framework (RAT-Attn).

BIRAL SWS-100 Visibility Sensor.