We’re pleased to share that our recent journal paper, “Multi-Branch Aesthetic and Technical Perspectives with Cross Tri-Fusion Attention for No-Reference Audio-Visual Quality Assessment,” was accepted this month (March 2026) by IEEE Transactions on Circuits and Systems for Video Technology (TCSVT). The paper is a collaboration with The Hong Kong Polytechnic University:

- Ngai-Wing Kwong, Yui-Lam Chan, Ziyin Huang, and Sik-Ho Tsang, “Multi-Branch Aesthetic and Technical Perspectives with Cross Tri-Fusion Attention for No-Reference Audio-Visual Quality Assessment,” IEEE Transactions on Circuits and Systems for Video Technology, doi: 10.1109/TCSVT.2026.3674552.

https://ieeexplore.ieee.org/document/11435472

Paper Abstract

In today’s world of rapidly spreading information and the dominance of short videos on social media platforms such as Instagram, YouTube, and TikTok, ensuring high-quality audio and video has become increasingly important. Traditional quality assessment methods usually evaluate the sound and the visuals separately and then combine the results. However, this approach misses the way audio and visuals interact with each other, which affects how viewers experience a video.

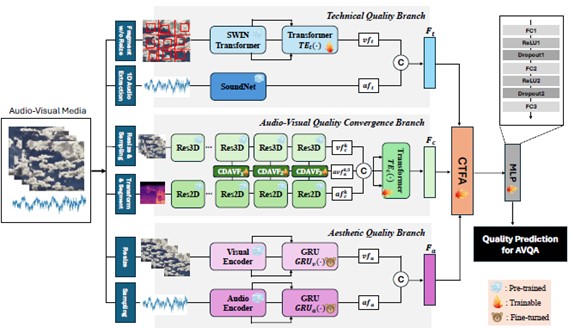

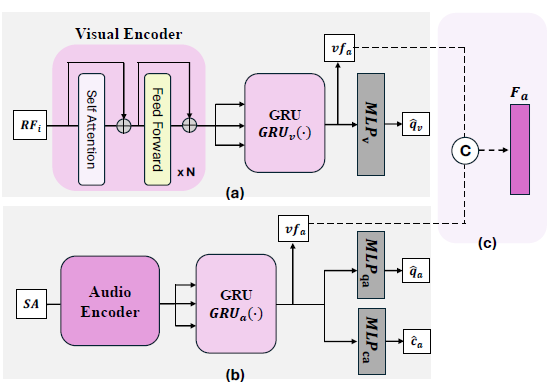

To address this, we developed a new model that evaluates both audio and video quality together. It uses multiple parts (or “branches”) that each focus on different aspects of quality. One part uses a new technique we call Cross-Dimensional Audio-Visual Fusion (CDAVF), which helps the model understand how sound and visuals influence each other. Another part checks for technical problems like distortion or noise, while a third part focuses on how pleasant or meaningful the content appears to people.

After gathering all this information, our model uses another new method called Cross Tri-Fusion Attention (CTFA) to combine everything intelligently, so it can make more accurate quality judgments.
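For readers curious what attention-based fusion of several branches can look like in practice, here is a minimal Python sketch. It is not the CTFA module from the paper: the function name `fuse_branches`, the feature values, and the choice of plain scaled dot-product attention followed by averaging are all assumptions made purely for illustration.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a, b):
    """Dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))


def fuse_branches(branches):
    """Fuse equal-length branch feature vectors with scaled dot-product attention.

    Hypothetical stand-in for an attention-based fusion step (not the paper's
    CTFA): every branch attends to all branches, and the attended vectors are
    then averaged into one fused representation.
    """
    d = len(branches[0])
    attended, all_weights = [], []
    for query in branches:
        # Attention weights of this branch over all branches (sum to 1).
        weights = softmax([dot(query, key) / math.sqrt(d) for key in branches])
        all_weights.append(weights)
        # Weighted mix of all branch vectors.
        attended.append(
            [sum(w * v[i] for w, v in zip(weights, branches)) for i in range(d)]
        )
    fused = [sum(vec[i] for vec in attended) / len(attended) for i in range(d)]
    return fused, all_weights


# Made-up 4-dimensional features for the three branches (not real data).
audio_visual = [0.2, 0.9, 0.1, 0.4]
technical = [0.7, 0.1, 0.3, 0.5]
aesthetic = [0.3, 0.8, 0.2, 0.6]

fused, weights = fuse_branches([audio_visual, technical, aesthetic])
```

In this sketch, `fused` is a single vector that a later regression layer could map to a quality score, and each row of `weights` shows how strongly one branch draws on the other two.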

We tested our model using professional and user-generated video datasets and found that it consistently performed better than existing methods. This shows that our approach is both more accurate and more dependable for assessing the overall quality of audio-visual content.

We will keep advancing our collaboration with leading research institutions to develop cutting-edge technologies!

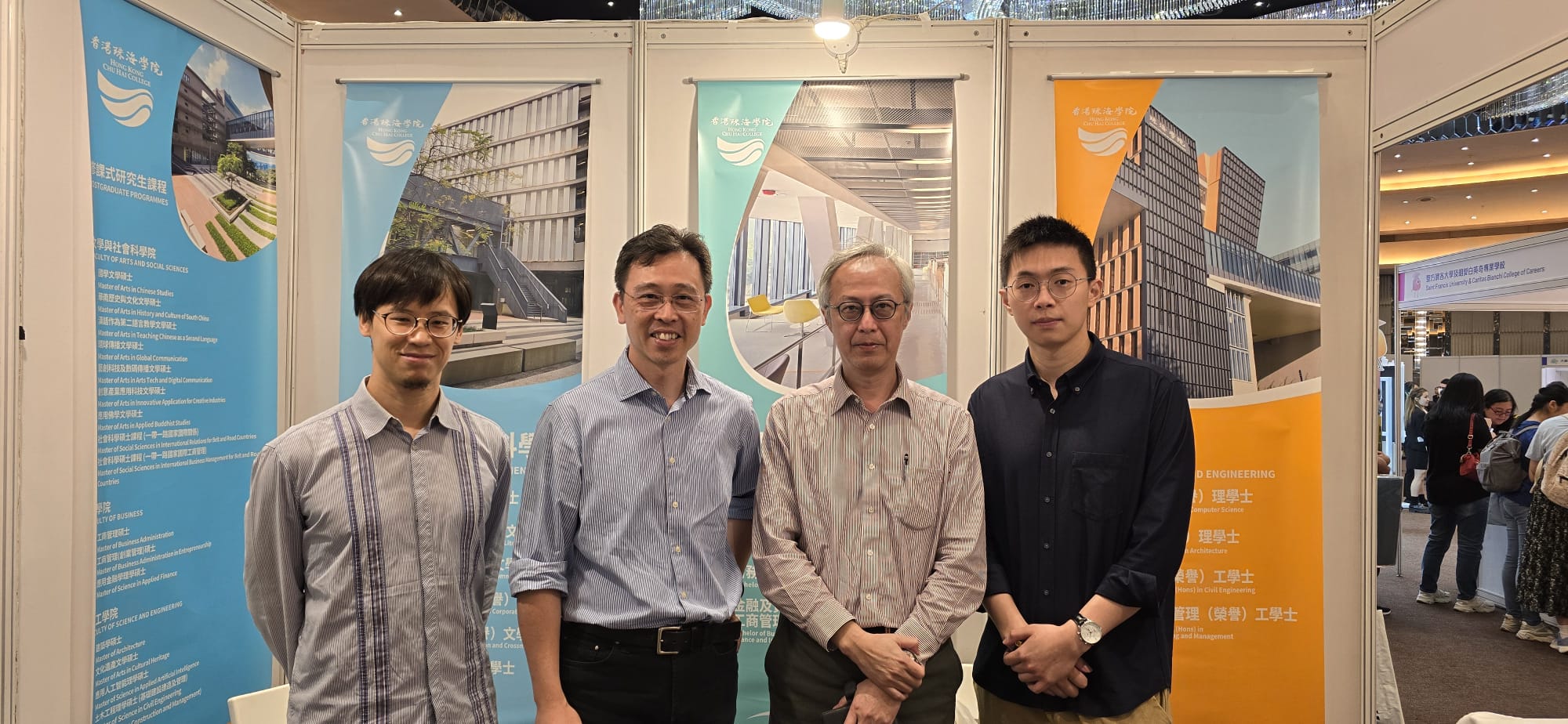

The research team members

Hong Kong Chu Hai College

- Dr Harris Sik-Ho Tsang, Assistant Professor in the Department of Computer Science

The Hong Kong Polytechnic University

- Dr Ngai-Wing Kwong, Postdoctoral Fellow in the Department of Electrical and Electronic Engineering

- Dr Yui-Lam Chan, Associate Professor and Associate Head in the Department of Electrical and Electronic Engineering

- Dr Ziyin Huang, Postdoctoral Fellow in the Department of Electrical and Electronic Engineering

Figures from the paper

The overall framework of our proposed NR-AVQA neural network model.

Our proposed fine-tuning processes in the aesthetic quality branch.